An algorithm for distinguishing apples from bananas


When the recognition problem is reduced to distinguishing among just a few objects under controlled conditions, simple yet effective algorithms can be used: such a simplified image recognition problem admits algorithms that are neither complex nor computationally intensive.

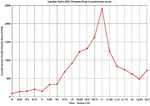

For this paper we will consider the situation where one has a conveyer belt (see figure 1) on which both apples and bananas are placed.

In order to comply with the problem condition of ``limited computational resources'', we will assume the use of a low-end computer or PC. For example, one may have only a $1000 PC of the kind typically found in retail personal computer stores in the late 1990s.

In keeping with the low-cost constraints, we will assume that a low-end black and white digital camera that produces uncompressed TIFF images is connected to the computer. Our solution below does not depend on the camera producing TIFF format images: any format in which the values of individual pixels can be quickly determined is sufficient. Using a camera that does not compress its images, and no significant image processing software, is in keeping with the same ``limited computational resources'' problem theme.

We assume that fruit is placed on the conveyer belt so that there is some space between each individual fruit. We will assume that there is some mechanism, perhaps a light beam & photocell device, to detect when fruit is under the camera. We will also assume that the camera is positioned such that when the fruit detector detects a piece of fruit, only that fruit is within the view of the camera.

We will further assume that the conveyer belt is a low-gloss, flat black color.

It should be noted that, due to the speed of the belt, one has only a small amount of time to make the apple / banana decision. This constraint is in keeping with the problem condition of ``limited decision time''.

It should also be noted that we do not assume any particular orientation of the fruit other than that it lies flat on the conveyer belt. For apples this can mean almost any position, whereas a banana's orientation is more restricted by gravity.

In the above problem scenario, when the fruit detector determines that a piece of fruit is under the camera, a picture is taken and a TIFF image is transferred to the computer's memory. A program detects the transfer of the TIFF image to the computer. The program in turn counts the number of set bits (white pixels) and, based on a pre-determined threshold value, determines whether the fruit is an apple or a banana.
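The decision step just described can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the disting program itself: it assumes the pixel data has already been extracted from the TIFF file as packed 1-bit rows (8 pixels per byte) in which a set bit is a white pixel. The names `count_set_bits` and `classify_fruit`, and the threshold used in the usage lines, are hypothetical.

```python
# Hypothetical sketch of the set-bit-count classifier; not the original disting code.

# Lookup table: number of set bits in each possible byte value (0..255).
BITS_IN_BYTE = [bin(value).count("1") for value in range(256)]


def count_set_bits(packed_pixels: bytes) -> int:
    """Count the set (assumed white) pixels in packed 1-bit image data."""
    return sum(BITS_IN_BYTE[byte] for byte in packed_pixels)


def classify_fruit(packed_pixels: bytes, threshold: int) -> str:
    """Return "banana" if the white-pixel count exceeds threshold, else "apple"."""
    return "banana" if count_set_bits(packed_pixels) > threshold else "apple"


if __name__ == "__main__":
    # Tiny synthetic image: 3 bytes = 24 pixels, 10 of them set.
    image = bytes([0b11111111, 0b00000011, 0b00000000])
    print(count_set_bits(image))               # 10
    print(classify_fruit(image, threshold=5))  # banana
```

The 256-entry lookup table reduces the per-byte work to a single table access, which suits the low-end hardware assumed here. If rows are padded out to a byte boundary, the padding bits must be zero (or masked off) so they are not counted; and for a TIFF whose photometric interpretation is WhiteIsZero, the sense of the bits is inverted.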


The image transfer and bit count process can be done fairly quickly. Low end camera images are typically small. Thus the transfer from the camera to computer memory as well as bit counting will not take very long.

As an example, a 413 by 413 pixel TIFF B&W image is typically less than 22k bytes in size. A low-end camera takes an image in 1/60th of a second. Given the speed of most video signals, the download time is usually much less than 1/60th of a second. Thus the total camera, download and memory scan time would be no more than 1/30th of a second.
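The size and timing figures above can be checked with a little arithmetic. This sketch assumes the pixel data is stored uncompressed at 1 bit per pixel with each row padded out to a whole byte, and it ignores the TIFF header and tag data, which add a comparatively small overhead; the constant names are illustrative only.

```python
import math
from fractions import Fraction

WIDTH = HEIGHT = 413   # pixels, from the text
BITS_PER_PIXEL = 1     # B&W (bilevel) image

# Assumption: each row is padded out to a whole number of bytes.
bytes_per_row = math.ceil(WIDTH * BITS_PER_PIXEL / 8)  # 52
pixel_data_bytes = bytes_per_row * HEIGHT              # 21476

# Time budget: one frame time to capture plus, at most, one frame time to download.
capture = Fraction(1, 60)
download = Fraction(1, 60)  # upper bound; usually much less
total = capture + download  # 1/30 second

print(WIDTH * HEIGHT, bytes_per_row, pixel_data_bytes, total)
```

The 170,569 pixels fit in 21,476 bytes of pixel data, just under the 22k-byte figure quoted above.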

We will assume that the image of a detected fruit has been transferred to the computer (steps 1 thru 4, see figure 2). For the purposes of this test, the image is stored on disk and is read in by the disting program.

In our test, we used a wide variety of apples and bananas that are commonly found in major produce stores of the United States. While apples in US markets come in multiple varieties, bananas in US markets are often of a single variety.


The quality of the fruit selected was typical of a major US produce section of a supermarket. Apples were reasonably ripe and free of spots. Bananas were somewhat green (as is the unfortunate case of most bananas in the US) with few spots.

Produce quality grade apples and bananas vary in color, shape and size. To account for these differences, we selected 2 of each variety of apple and of banana. With the kind assistance of a local produce vendor, we selected both the largest and the smallest (by weight) of each variety used (see table 1).

Given that we cannot assume any particular orientation of the fruit other than that it lies flat on the conveyer belt, we took 2 images of each fruit. In the case of apples (which are reasonably symmetric around the axis of the stem), we imaged them both on their sides and on their ends. In the case of bananas, which when lying flat are restricted to resting on their sides, we imaged both sides.

The original images taken were from an 8 bit color camera, 413 by 413 pixels in size. At the distance taken, the resolution was approximately 20 pixels per centimeter (50 pixels per inch). Lighting was from behind the camera.

| Type - Name | Large image | Small image |
|---|---|---|
| 4015 - Red Delicious | image 1 image 2 | image 1 image 2 |
| 4020 - Golden Delicious | image 1 image 2 | image 1 image 2 |
| 4103 - Braeburn | image 1 image 2 | image 1 image 2 |
| 4131 - Fuji | image 1 image 2 | image 1 image 2 |
| 4133 - Gala | image 1 image 2 | image 1 image 2 |
| 4139 - Granny Smith | image 1 image 2 | image 1 image 2 |
| 4153 - McIntosh | image 1 image 2 | image 1 image 2 |
| 4011 - Regular Cabanita (banana) | image 1 image 2 | image 1 image 2 |

We converted the original color images (see table 1) into B&W images by two means: one method was a dithering process, the other was a saturation process. Color to B&W dithering was performed in a standard fashion by the XV program, using its TIFF dithering method.
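The text does not say which algorithm XV's dithering uses. As a stand-in, the sketch below shows Floyd-Steinberg error diffusion, one common way to dither a grayscale image to B&W. It assumes the color image has already been reduced to per-pixel luminosity values from 0 to 255; the function name is hypothetical, and this is not claimed to be XV's method.

```python
def floyd_steinberg(lum: list[list[float]]) -> list[list[int]]:
    """Dither a grayscale image (values 0..255) to 1-bit pixels (1 = white)."""
    height, width = len(lum), len(lum[0])
    work = [[float(v) for v in row] for row in lum]  # copy; errors diffuse into it
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            old = work[y][x]
            white = old >= 128.0
            out[y][x] = 1 if white else 0
            err = old - (255.0 if white else 0.0)
            # Push the quantization error onto neighbours not yet processed.
            if x + 1 < width:
                work[y][x + 1] += err * 7 / 16
            if y + 1 < height:
                if x > 0:
                    work[y + 1][x - 1] += err * 3 / 16
                work[y + 1][x] += err * 5 / 16
                if x + 1 < width:
                    work[y + 1][x + 1] += err * 1 / 16
    return out
```

Because each pixel's rounding error is passed on to its neighbours, the fraction of white pixels roughly tracks the average luminosity, so a brighter or larger fruit yields more white pixels.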

The saturation conversion process used a luminosity step function. The step was set to the highest luminosity level such that very few pixels of the flat black background (the simulated conveyer belt) registered as white pixels. The luminosity level was set by experimentation on the reference frame images (pictures without fruit).
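The saturation step function, and one way to automate the reference-frame experiment, might look like the minimal sketch below. It assumes the image is available as rows of integer luminosity values and that pixels strictly brighter than the step become white. `calibrate_step` and its `max_white_fraction` tolerance are hypothetical: the function places the step just above all but a small fraction of the empty-belt luminosities, whereas the level we used was set by experimentation.

```python
def saturate(lum: list[list[int]], step: int) -> list[list[int]]:
    """Luminosity step function: 1 (white) if luminosity > step, else 0 (black)."""
    return [[1 if value > step else 0 for value in row] for row in lum]


def calibrate_step(reference: list[list[int]], max_white_fraction: float = 0.001) -> int:
    """Pick a step so at most max_white_fraction of empty-belt pixels turn white.

    reference is a non-empty reference frame (belt with no fruit).
    max_white_fraction is a hypothetical tolerance, not a value from the text.
    """
    values = sorted(value for row in reference for value in row)
    allowed = int(len(values) * max_white_fraction)  # pixels allowed above the step
    # Only the `allowed` largest values (or fewer, with ties) exceed this step.
    return values[len(values) - 1 - allowed]
```

For example, with a 2 by 2 reference frame of luminosities 10, 11, 12 and 13 and a tolerance of 0.25, the step becomes 12, so only the single brightest belt pixel saturates to white.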

| Reference frame | Color image | Dithered B&W image | Saturation B&W image |
|---|---|---|---|
| Flat black conveyer belt | color | dithered | saturated |
| All white full luminosity | color | dithered | saturated |

The results of converting table 2 images into B&W images are found in table 4 below.

| Type - Name | Large dithered | Small dithered | Large saturated | Small saturated |
|---|---|---|---|---|
| 4015 - Red Delicious | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |
| 4020 - Golden Delicious | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |
| 4103 - Braeburn | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |
| 4131 - Fuji | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |
| 4133 - Gala | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |
| 4139 - Granny Smith | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |
| 4153 - McIntosh | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |
| 4011 - Regular Cabanita (banana) | image 1 image 2 | image 1 image 2 | image 1 image 2 | image 1 image 2 |

| Type - Name | Dithered count (% of 170,569 pixels) | Saturated count (% of 170,569 pixels) |
|---|---|---|
| 4015 - Red Delicious | 865 (0.507%) 476 (0.279%) 1073 (0.629%) 320 (0.188%) | 2608 (1.529%) 2569 (1.506%) 4065 (2.383%) 1614 (0.946%) |
| 4020 - Golden Delicious | 4456 (2.612%) 3422 (2.006%) 2409 (1.412%) 3022 (1.772%) | 11997 (7.033%) 9660 (5.663%) 8310 (4.872%) 9539 (5.592%) |
| 4103 - Braeburn | 1485 (0.871%) 1636 (0.959%) 1367 (0.801%) 1613 (0.946%) | 6566 (3.849%) 7383 (4.328%) 5377 (3.152%) 5835 (3.421%) |
| 4131 - Fuji | 2820 (1.653%) 2828 (1.658%) 1482 (0.869%) 2342 (1.373%) | 9305 (5.455%) 10145 (5.947%) 5652 (3.313%) 9079 (5.323%) |
| 4133 - Gala | 3018 (1.769%) 1901 (1.114%) 2063 (1.209%) 2321 (1.361%) | 9603 (5.630%) 7117 (4.172%) 7509 (4.402%) 8364 (4.903%) |
| 4139 - Granny Smith | 1654 (0.970%) 1277 (0.749%) 1796 (1.053%) 1040 (0.610%) | 6690 (3.922%) 7433 (4.358%) 6942 (4.070%) 6490 (3.805%) |
| 4153 - McIntosh | 1410 (0.827%) 970 (0.569%) 1415 (0.830%) 2066 (1.211%) | 5140 (3.013%) 4935 (2.893%) 4500 (2.638%) 8224 (4.821%) |
| 4011 - Regular Cabanita (banana) | 8360 (4.901%) 12300 (7.211%) 5699 (3.341%) 6844 (4.012%) | 25946 (15.211%) 29089 (17.053%) 20263 (11.879%) 18603 (10.906%) |
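The percentages in the table are consistent with each count being divided by the 413 by 413 = 170,569 pixels in an image; for example, 865 / 170,569 is about 0.507%. The sketch below recomputes a few entries; the helper name is hypothetical.

```python
WIDTH = HEIGHT = 413
TOTAL_PIXELS = WIDTH * HEIGHT  # 170,569 pixels in each image


def white_percent(count: int) -> float:
    """Return a white-pixel count as a percentage of the whole image."""
    return 100.0 * count / TOTAL_PIXELS


if __name__ == "__main__":
    # A few counts taken from the pixel count table above.
    for count in (865, 4456, 5699, 12300):
        print(f"{count} ({white_percent(count):.3f}%)")
```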

The lowest and highest pixel counts from table 4 for apples and bananas, for both dithered and saturated images, are found in table 6 below.

| Fruit | Dithered | Saturated |
|---|---|---|
| Apples | 320 (0.188%) min, 4456 (2.612%) max | 1614 (0.946%) min, 11997 (7.033%) max |
| Bananas | 5699 (3.341%) min, 12300 (7.211%) max | 18603 (10.906%) min, 29089 (17.053%) max |
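The text does not say how the pre-determined threshold was chosen. One simple, hypothetical rule is to place it halfway across the gap between the largest apple count and the smallest banana count in the table above; with a `count > threshold` test for bananas, every image in this data set would then be classified correctly. The function name and the midpoint rule are assumptions for illustration.

```python
def midpoint_threshold(apple_max: int, banana_min: int) -> int:
    """Hypothetical rule: a threshold halfway across the apple/banana gap."""
    if apple_max >= banana_min:
        raise ValueError("apple and banana counts overlap; no clean threshold")
    return (apple_max + banana_min) // 2


# Largest apple and smallest banana white-pixel counts from the table above.
EXTREMES = {
    "dithered": (4456, 5699),
    "saturated": (11997, 18603),
}

if __name__ == "__main__":
    for method, (apple_max, banana_min) in EXTREMES.items():
        threshold = midpoint_threshold(apple_max, banana_min)
        gap = banana_min - apple_max
        print(f"{method}: threshold {threshold}, gap {gap} pixels")
```

A midpoint gives equal margin on both sides of the observed gap; with a larger sample one might instead weigh the spread of each population.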

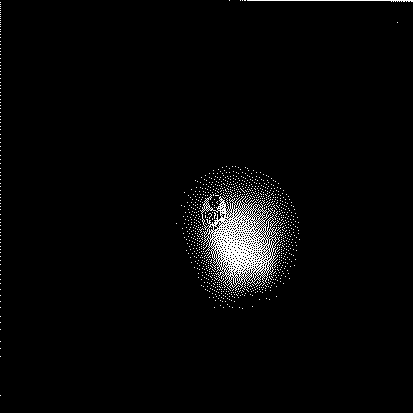

Dithered and saturated apple images with the most white pixels

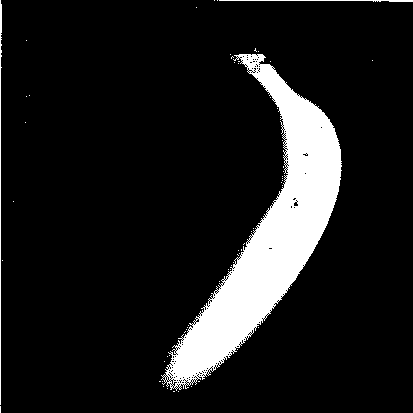

Dithered and saturated banana images with the most white pixels

All of the varieties, sizes and orientations of apples that we tested had notably fewer set pixels than the bananas. This relationship held for both dithered and saturated images.

The large Golden Delicious apple produced the most white pixels among the saturated apple images. However, that saturated apple image had only 64.5% of the white pixels of the saturated banana image with the fewest white pixels. The large Golden Delicious also produced the most white pixels among the dithered apple images, yet that dithered apple image had only 78.2% of the white pixels of the dithered banana image with the fewest white pixels.
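Both worst-case percentages follow directly from the extremes in table 6: the largest apple count divided by the smallest banana count for each conversion method.

$$
\text{dithered: } \frac{4456}{5699} \approx 0.782, \qquad
\text{saturated: } \frac{11997}{18603} \approx 0.645
$$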

As one can see from table 6, however, the typical apple imaged was even more distinct. On average, bananas had between about 3.4 (saturated) and 4.4 (dithered) times as many white pixels as apples.

| Statistic | Dithered Apple | Saturated Apple | Dithered Banana | Saturated Banana | Dithered Banana/Apple | Saturated Banana/Apple |
|---|---|---|---|---|---|---|
| Average | 1876.7 | 6880.4 | 8300.7 | 23475.2 | 4.423 | 3.412 |
| Standard Deviation | 929.7 | 2486.6 | 2880.3 | 4887.9 | (n/a) | (n/a) |
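The averages and ratios above can be recomputed from the per-image counts in the pixel count table. In this sketch the ratio columns are taken as the banana average divided by the apple average, and the banana standard deviations match the sample (n-1) form, which is what Python's `statistics.stdev` computes. The printed values agree with the table to within about 0.1, since the table appears to truncate rather than round its last digit.

```python
import statistics

# White-pixel counts from the pixel count table (4 images per variety).
APPLE_DITHERED = [
    865, 476, 1073, 320,     # Red Delicious
    4456, 3422, 2409, 3022,  # Golden Delicious
    1485, 1636, 1367, 1613,  # Braeburn
    2820, 2828, 1482, 2342,  # Fuji
    3018, 1901, 2063, 2321,  # Gala
    1654, 1277, 1796, 1040,  # Granny Smith
    1410, 970, 1415, 2066,   # McIntosh
]
APPLE_SATURATED = [
    2608, 2569, 4065, 1614,     # Red Delicious
    11997, 9660, 8310, 9539,    # Golden Delicious
    6566, 7383, 5377, 5835,     # Braeburn
    9305, 10145, 5652, 9079,    # Fuji
    9603, 7117, 7509, 8364,     # Gala
    6690, 7433, 6942, 6490,     # Granny Smith
    5140, 4935, 4500, 8224,     # McIntosh
]
BANANA_DITHERED = [8360, 12300, 5699, 6844]
BANANA_SATURATED = [25946, 29089, 20263, 18603]


def summarize(counts: list[int]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) of a list of counts."""
    return statistics.mean(counts), statistics.stdev(counts)


if __name__ == "__main__":
    for name, counts in [
        ("dithered apple", APPLE_DITHERED),
        ("saturated apple", APPLE_SATURATED),
        ("dithered banana", BANANA_DITHERED),
        ("saturated banana", BANANA_SATURATED),
    ]:
        mean, sd = summarize(counts)
        print(f"{name}: average {mean:.1f}, std dev {sd:.1f}")
    for method, apples, bananas in [
        ("dithered", APPLE_DITHERED, BANANA_DITHERED),
        ("saturated", APPLE_SATURATED, BANANA_SATURATED),
    ]:
        ratio = statistics.mean(bananas) / statistics.mean(apples)
        print(f"{method} banana/apple: {ratio:.3f}")
```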

On average, dithering of images produced the sharpest distinction between apples and bananas (an average banana/apple ratio of 4.423 versus 3.412 for saturation), so dithered images would presumably result in fewer misidentification errors. But even in the worst case that we observed, the apple and banana white pixel count populations were very distinct.

The time to both read the image and count the pixels was negligible, often less than 10 milliseconds on a 200 MHz Pentium or an SGI R5k O2.


© 1994-2013

Landon Curt Noll chongo (was here) /\oo/\ $Revision: 8.1 $ $Date: 2022/07/07 23:08:23 $